The 10-Year-Old Summa-Cum-Laude Graduate of World’s Finest University

… all brains and knowledge, but zero experience.

If you ever wonder why you fail with common AI, keep in mind that AI has all the academic knowledge but doesn’t really know what to do with it. In the best case (and only then), it applies that knowledge in an “academic” way: theoretically perfect, not to say “idealistic”, but without the slightest idea of the difference between theory and the real world.

“Smart” AI companies add excessive RAG knowledge to bridge the gap between the training-freeze date of the LLM (the AI’s knowledge base) and today, or they use “smart” web search to update the information. Or they add industry- or company-specific information aplenty.

Then they “hedge” it (cage might be the better word) into an agentic framework to avoid hallucination, that is, inventing answers when there are none. And when they recognize hallucination and the underlying “autoregressive commitment”, they reset the AI. Whatever it learned until then is, in most cases, gone. You’re back to the 10-year-old summa-cum-laude graduate.

A Lesson From Aviation

In discussions with Daniel, KieuAnh and others, the most common mistake we see is the belief that AI is omniscient, knowing it all. But knowledge is only half the battle. It’s useless without experience. Would you take a summa-cum-laude graduate, fresh from university, into an airline operations center and hand over decision making? No? Hmm. But many managers (and media) imply exactly that.

Daniel focuses on the 2 a.m. disruption: five aircraft, 1,000 passengers grounded. A recent study said passengers don’t mind a disruption. They mind not being informed and feeling left alone: when their mobile tools give them different information than the staff on site trying to help them, when processes are disrupted by 10 different systems giving 12 different answers about “reality”.

Daniel calls it articulate intelligence, but that is looking at the status quo. I believe we are where the web was in 1996, two years after it went public. Belittled, laughed at, “something for academics and freaks”, I was told in 1996 when I recommended registering the companies’ “domain names”. Or, the same year, the GDS emphasizing that it would be “impossible” to book their services on the web; less than three months later we signed with Siemens. In 1997 we were their shining example that they could do web (screen-scraping times). It’s the same hype now as in 1998/99. The bubble will implode sooner or later, but AI is here to stay.

The AI Amplifier

Garbage in … Garbage out

The old saying. This is more than 10 years old: before Thorsten Dirks joined Lufthansa, he was President and CEO of Telefonica Germany. So that was pre-aviation, but very much about mobile communication and the web.

Most aviation processes are built on 1960s technology. Basic fact: aviation cannot write Jürgen. It’s JUERGEN. Because way down, under layers and layers of “upgrades” to the core process, that very core process is a 6-bit system. 64 characters. Minus A-Z = 38. Minus 0-9 = 28. An availability call is A (or, on the original Sabre, 1), a phone field is 9, a name is -, 7 is ticketing information, etc. The system itself also needs some of those characters as commands, like $ for print.

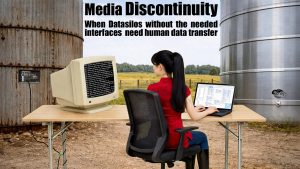
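To make the 6-bit arithmetic tangible, here is a minimal Python sketch. It only recomputes the character budget from the paragraph above and shows how a name like Jürgen ends up as JUERGEN. The function name `to_legacy_name`, the allowed character set and the umlaut table are assumptions for illustration; only the Jürgen example itself comes from the text, and real reservation systems define their own character sets.

```python
import string
import unicodedata

BITS = 6
CODE_POINTS = 2 ** BITS                      # 64 distinct characters
LETTERS = len(string.ascii_uppercase)        # 26 (A-Z)
DIGITS = len(string.digits)                  # 10 (0-9)
REMAINING = CODE_POINTS - LETTERS - DIGITS   # 28 left for everything else

# Assumed transliteration table (illustrative, not taken from any standard).
TRANSLITERATION = {"Ä": "AE", "Ö": "OE", "Ü": "UE"}

# Assumed allowed set for a name field: upper-case letters, digits, space.
ALLOWED = set(string.ascii_uppercase + string.digits + " ")


def to_legacy_name(name: str) -> str:
    """Squeeze a name into upper-case A-Z/0-9, as a 6-bit system would need."""
    # Compose characters first so "u" + combining diaeresis becomes "ü".
    normalized = unicodedata.normalize("NFC", name).upper()
    out = []
    for ch in normalized:
        replacement = TRANSLITERATION.get(ch, ch)
        if not all(c in ALLOWED for c in replacement):
            raise ValueError(f"cannot encode {ch!r} in the legacy character set")
        out.append(replacement)
    return "".join(out)
```

Calling `to_legacy_name("Jürgen")` returns `"JUERGEN"`. Characters the table does not cover raise an error instead of being silently dropped, which is a design choice of this sketch rather than a description of how any real system behaves.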

Data Silos – Silo Thinking

Most of aviation is silo thinking, resulting in fragmented and inconsistent data; SITA senior managers talk about “the Source of the Most-Common Truth” and take that problem as a given. There are tools like DTP’s tNexus, “managing” the non-standard use of, for example, Standard Schedules Information Manual data. Say what? Yes, all kinds of players (software, airline, others) think that the “Standard” is not good enough for them. Instead of enhancing it, they simply ignore it and modify it to their liking, not understanding that it causes trouble for anyone outside their little bubble. Embarrassing.

During the development of A-CDM, DFS, one of the largest European ANSPs (air traffic control operators), demanded that all the operational A-CDM data be pushed to them. Asked for a return feed to make sure their changes are reflected in the Airport Operations Control Center, they raised a paywall that made it all but impractical. Asked why, they stated that they’d have to change their system to create such a data push. So instead of enabling the future, they decided to hide it behind a paywall. Check their AIP data: they are one of three ANSPs hiding it behind a paywall.

Evolution

In discussions with the academics working on a next-gen LLM to overcome the known issues of autoregressive commitment and the necessity for constant commitment, I asked my usual “stupid questions”. It’s now the pet project I develop.

What if …

… we use contemporary tools to allow AI to take time to respond, and to say “I don’t know”. That is possible today without changing the LLM (e.g. pydantic-ai).

… we enable them to create teams that discuss the problem, qualify different ideas, ask questions, research, learn (e.g. CrewAI).

… we let them create their own RAG, with information on failures and successes?

… we give them space to breathe, learn and remember?

What if those 10-year-old graduates learn?

With the help of two of the professors, I am currently trying to answer that question on a prototype server. So far we are confident that this will be very different from the usual AI setups.
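Two of the ideas listed above, letting the AI say “I don’t know” and letting it keep its own record of failures and successes, can be sketched in a few lines of plain Python. This is not the prototype and it does not use pydantic-ai or CrewAI. All names (`Answer`, `DontKnow`, `ExperienceLog`, `respond`), the confidence threshold and the keyword matching are hypothetical stand-ins for illustration only.

```python
from __future__ import annotations

from dataclasses import dataclass, field


@dataclass
class Answer:
    text: str
    confidence: float
    lessons: list[str] = field(default_factory=list)


@dataclass
class DontKnow:
    reason: str


@dataclass
class Experience:
    topic: str
    outcome: str   # "success" or "failure"
    lesson: str


@dataclass
class ExperienceLog:
    """Tiny stand-in for a self-built store of successes and failures."""
    entries: list[Experience] = field(default_factory=list)

    def remember(self, topic: str, outcome: str, lesson: str) -> None:
        self.entries.append(Experience(topic, outcome, lesson))

    def recall(self, question: str) -> list[Experience]:
        # Naive keyword overlap; a real setup would use embeddings or search.
        words = set(question.lower().split())
        return [e for e in self.entries if words & set(e.topic.lower().split())]


def respond(question: str, draft: str, confidence: float,
            log: ExperienceLog, threshold: float = 0.7) -> Answer | DontKnow:
    """Return the draft only if confident enough, else admit not knowing."""
    if confidence < threshold:
        return DontKnow(f"confidence {confidence:.2f} is below {threshold:.2f}")
    # Attach whatever was learned earlier on similar topics.
    lessons = [e.lesson for e in log.recall(question)]
    return Answer(draft, confidence, lessons)
```

The point of the design is that “I don’t know” is a regular return type rather than an error, and that the log survives a reset of the model, so earlier lessons travel with the next answer.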

Food for Thought

Comments welcome

P.S.: I don’t need money, but a GPU-powered AI server is expensive. If you are interested and want to sponsor one with a potent AI “graphics card” and 64 GB of VRAM, I can give it back if my little project fails. Otherwise, you’d get a front-row seat 😇