Incompatible Data

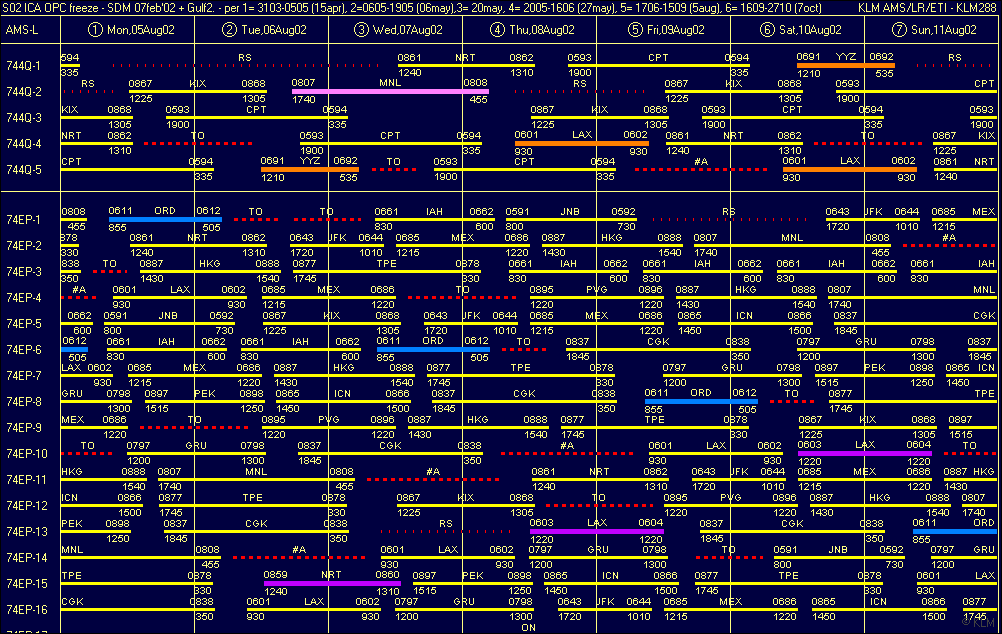

This week, Mark from OAG directed my attention to OAG’s Punctuality League, which they offer as a free download. They compiled the results in a “dashboard”, though I find it exceptionally unintuitive and more confusing than helpful. FlightStats offers similar information in tables and graphs that I find far more intuitive: the On-Time Performance Awards.

After a quick first look, it already shows that the two are incompatible.

I just look at the first OAG graph, “Top 20 Airlines by LCCs/Mainline Airlines”:

- Hawaiian Airlines (89.87%)

- Copa Airlines (88.75%)

- KLM (87.89%)

and compare it to FlightStats, where Hawaiian appears neither in the Top 10 International Airlines nor in the Major Airlines (neither Mainline nor Network), but only as number 1 among Regional Airlines. KLM is 1st on International Network flights and 4th on Mainline flights.

When I first came across the FlightStats monthly statistics for airlines and airports, I contacted them (with no reply) to ask whether I might add them as an indicator to our airport data, as I consider that valuable information for aviation network planners.

But as I immediately stumble over differences, it raises questions. It might be a good idea for OAG and FlightStats to talk to each other to make sure they use the same data and logic before they dig into detail, or to explain how they weigh and interpret the data. As is, there are unexplained differences. Sorry, now I distrust both sources…?

Indicator. Indicator?

It can only be an indicator, as both sources fail to relate airline and airport performance to one another. My first question would be how an airport’s on-time performance correlates with that of its hub airlines, because it is utterly unfair to blame an airport if its major hub airline is notoriously late.

Then one should also keep the size of an airport and its congestion in mind, e.g. British Airways suffering from congestion at London-Heathrow or Thai Airways in Bangkok. Who is the cause? Who is the victim?

Yes, at CheckIn.com we emphasize that all such data can only be indicators, to be interpreted by an experienced network planner, because a single new flight has a major impact on a new or small airport but little statistical relevance at a major hub. That said, isochrones are in themselves valuable statistical data, and we put them into our analyses for a reason: they are needed alongside the catchment area analysis to interpret the possible impact for a route. In forecasting, you work with indicators; you have no facts.

Big Data – Big Trouble

At the same time you work with big data, and the more data you work with, the more vital it is to get it from a sound source and have it integrated into a common system. Most established data providers, be it OAG, FlightStats, SITA, etc., have not yet addressed that, for a “good reason”. But as an industry, it is vital that we add this, and integration is very high on our backlog at CheckIn.com of where we want to go!

For the time being, national statistics differ from Eurostat, which differs from aviation industry statistics, which in turn differ from common sources. The differences in the data you get from FlightStats and OAG are just one example that this is also an issue in aviation. Who’s right? I even have examples where the numbers differ within an airport’s own website for a given year. In order to improve, we have got to tear down the walls! And yes, that’s part of what I will talk about at the coming Passenger Terminal Conference & Expo in March. Will you be there? Let us meet!

Rotational Impact

So, why do I give these on-time performance, no, these delay statistics so much thought? Aside from the cost of delays summing up to millions, they are not just a nuisance but a problem. When I did the additional case study on cost savings, based on the work on Zurich Airport’s deicing I did for SAE G12 and WinterOps.ca, I learned an important fact from Swiss (the airline). While the passengers affected on the immediate flight understand the problem and accept force majeure, the aircraft is not operating a single flight but an entire rotation (a chain of flights) during the day or week. Any major delay has a rippling effect in the network. And if you have a snow-caused delay in the morning in Zurich, your passengers on the evening flight back from their Mediterranean summer vacation will not understand and will file for compensation. And the airline usually pays!

And for network planning, it is vital to know whether you have to build (expensive) buffers into your schedule to cover for potential delays. That means your aircraft and especially your crews are not airborne as much as they could be, causing further loss of revenue. There is a very good reason airlines increasingly add clauses to their handling contracts with airports that penalize creating delays and reward reducing them. As someone said to be an expert in winter ops planning, I find technical or natural (weather) delays bad enough. But yes, delays are also caused by aviation management, be it the handling agent, airline operations or air traffic control.
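To make the trade-off between rippling delays and schedule buffers tangible, here is a minimal Python sketch. It rests on a strong simplification that is my own assumption for illustration: a delay is only ever reduced by the buffer built into each turnaround, and nothing else (no aircraft swaps, no faster flying). The function name and all minute values are hypothetical, not taken from any airline.

```python
def propagate_delay(initial_delay_min, turnaround_buffers_min):
    """Return the delay (in minutes) of every leg in a rotation.

    Simplified assumption: the first leg carries the initial delay, and each
    following turnaround can absorb at most its own buffer.
    """
    delays = [initial_delay_min]
    for buffer_min in turnaround_buffers_min:
        # A buffer can shrink the inherited delay, but never below zero.
        delays.append(max(0, delays[-1] - buffer_min))
    return delays


# Hypothetical rotation of four legs after a 90-minute morning delay.
tight = propagate_delay(90, [10, 10, 10])   # little slack in the schedule
padded = propagate_delay(90, [30, 30, 30])  # generous (expensive) buffers

print(tight)   # [90, 80, 70, 60]
print(padded)  # [90, 60, 30, 0]
```

With tight turnarounds, the last leg of the day is still an hour late; with padded turnarounds it leaves on time, but every buffer is time the aircraft and crew spend on the ground on every normal day.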

A Summary…

So what now? I think the availability of delay statistics is compelling, useful and needed. But take them with care, as you should all statistics. Try to understand how they are computed and the logic behind them, and ask your provider accordingly. Yes, that includes our own. That’s why we publish the CheckIn.com methodology. Only if you understand it can you interpret it yourself and trust it.

We have got to understand the value of data and common data structures in our industry. A delay is a delay? Nonsense, as I mentioned three years ago in the article about A-CDM.
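A small Python sketch shows why “a delay is a delay” does not hold once definitions differ. The flights and their delay minutes are invented for illustration, and the thresholds are assumptions of mine; I am not claiming this is how OAG or FlightStats compute their figures.

```python
# Hypothetical flights: (departure delay, arrival delay) in minutes.
flights = [(0, -5), (20, 10), (5, 16), (40, 35), (16, 14)]


def on_time_share(delays_min, threshold_min):
    """Share of flights whose delay is below the given threshold."""
    on_time = sum(1 for delay in delays_min if delay < threshold_min)
    return on_time / len(delays_min)


departure_delays = [dep for dep, _ in flights]
arrival_delays = [arr for _, arr in flights]

print(on_time_share(arrival_delays, 15))    # 0.6
print(on_time_share(departure_delays, 15))  # 0.4
print(on_time_share(arrival_delays, 5))     # 0.2
```

The same five flights come out as 60%, 40% or 20% “on time”, depending only on whether you measure arrivals or departures and where you set the threshold. Without the methodology, two such numbers cannot be compared.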

And I distrust any “closed source” company that does not provide me with the methodology behind its analyses. Like many airports do. On the other hand, at CheckIn.com, the value is not really the methodology (which is sound); it’s the work behind it, the compilation of data from different sources and the constant improvements we make to it. Only given sound data can we provide quality analyses. Given quality data, anyone can come up with more or less professional analyses. Even the calculations we use to determine an airport’s impact on a traveler’s likelihood of choosing one airport or another can be replicated. Though no, we don’t explain in detail how we do it, only the general concept. The hard work we put in every day to merge data from different sources and to compensate for mistakes and other shortcomings is what makes our work so hard to copy… and is a main part of our USP (Unique Selling Proposition), what makes us “unique”.

Food For Thought

Comments welcome!

![“Our Heads Are Round so our Thoughts Can Change Direction” [Francis Picabia]](https://foodforthought.barthel.eu/wp-content/uploads/2021/10/Picabia-Francis-Round-Heads-1200x675.jpg)

Back in 2000, in my

Back in 2000, in my

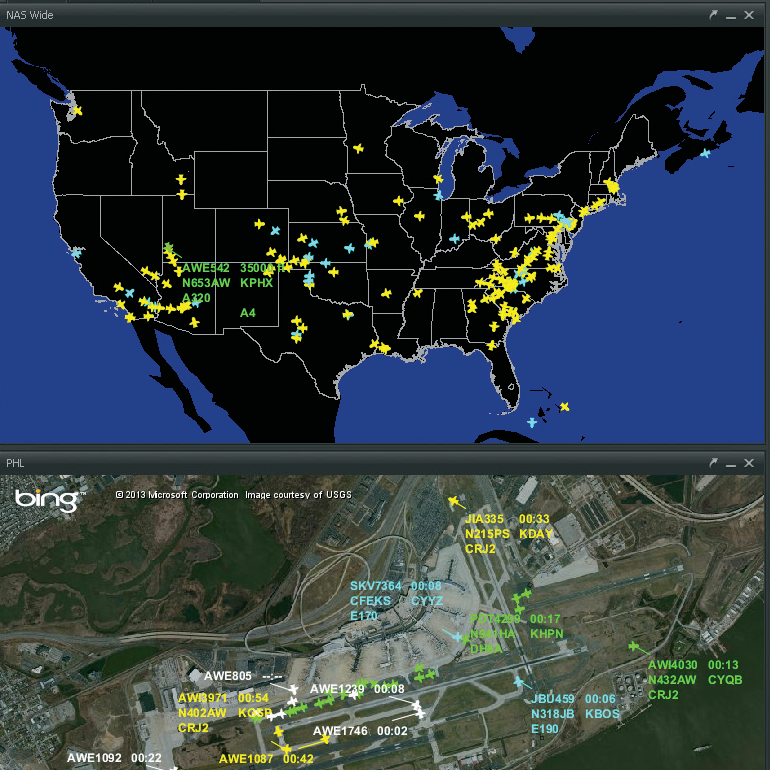

Having been pacemakers in e-Commerce, aviation today is light years behind other industries. U.S. tools showing aircraft in-flight on maps like Harris Corp. (Exelis)

Having been pacemakers in e-Commerce, aviation today is light years behind other industries. U.S. tools showing aircraft in-flight on maps like Harris Corp. (Exelis)  The last weeks the messages on LinkedIn, hyping the “Internet of Things” (

The last weeks the messages on LinkedIn, hyping the “Internet of Things” (